QC VISION

Real-time fabric defect detection on commodity edge hardware.

28ms Inference. 92% F1 Score.

Manual inspection relies on human visual acuity, which degrades rapidly over an 8-12 hour shift. Research indicates that defect capture rates drop from 70% in the first hour to below 40% by the eighth hour because of cognitive fatigue. This inconsistency leads to a 3-5% defect rate in final output.

In a market exporting $32.6B annually, this is a $390M opportunity loss. A single "Grade D" roll shipped to a buyer like H&M or Zara can result in a claim of $3,200, wiping out the margin for an entire batch.

Imported AOI rigs (e.g., KeyeTech) solve this but cost $12,000+ per unit. They are rigid, requiring perfect lighting and massive floor space. They are economically unviable for the 4,000+ SME factories that form the backbone of the supply chain.

DEFECT REDUCTION

Pilot result: Knit-Dye Plant #3, Gazipur.

MONTHLY SAVINGS

Material saved per 10k yards daily output.

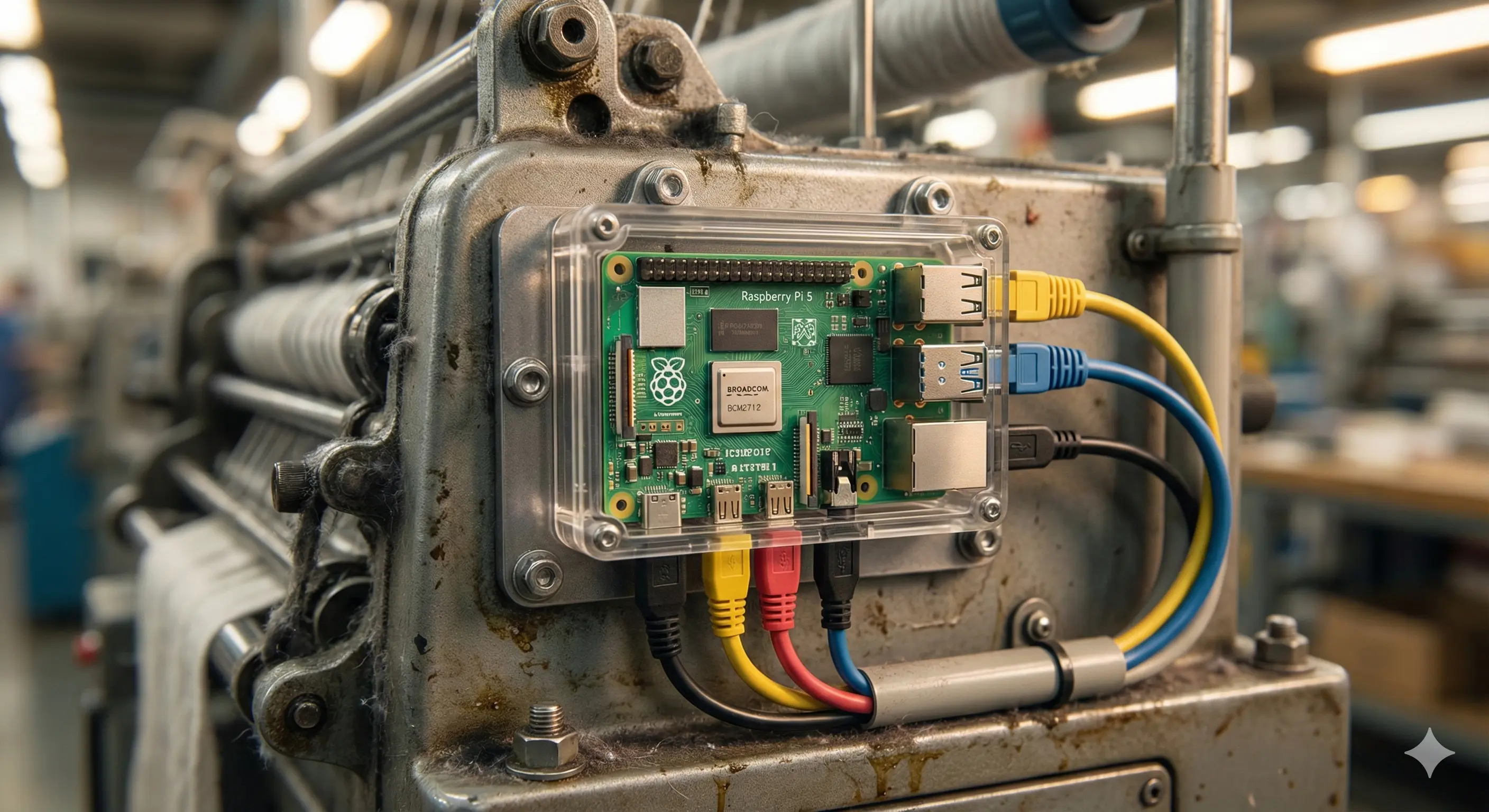

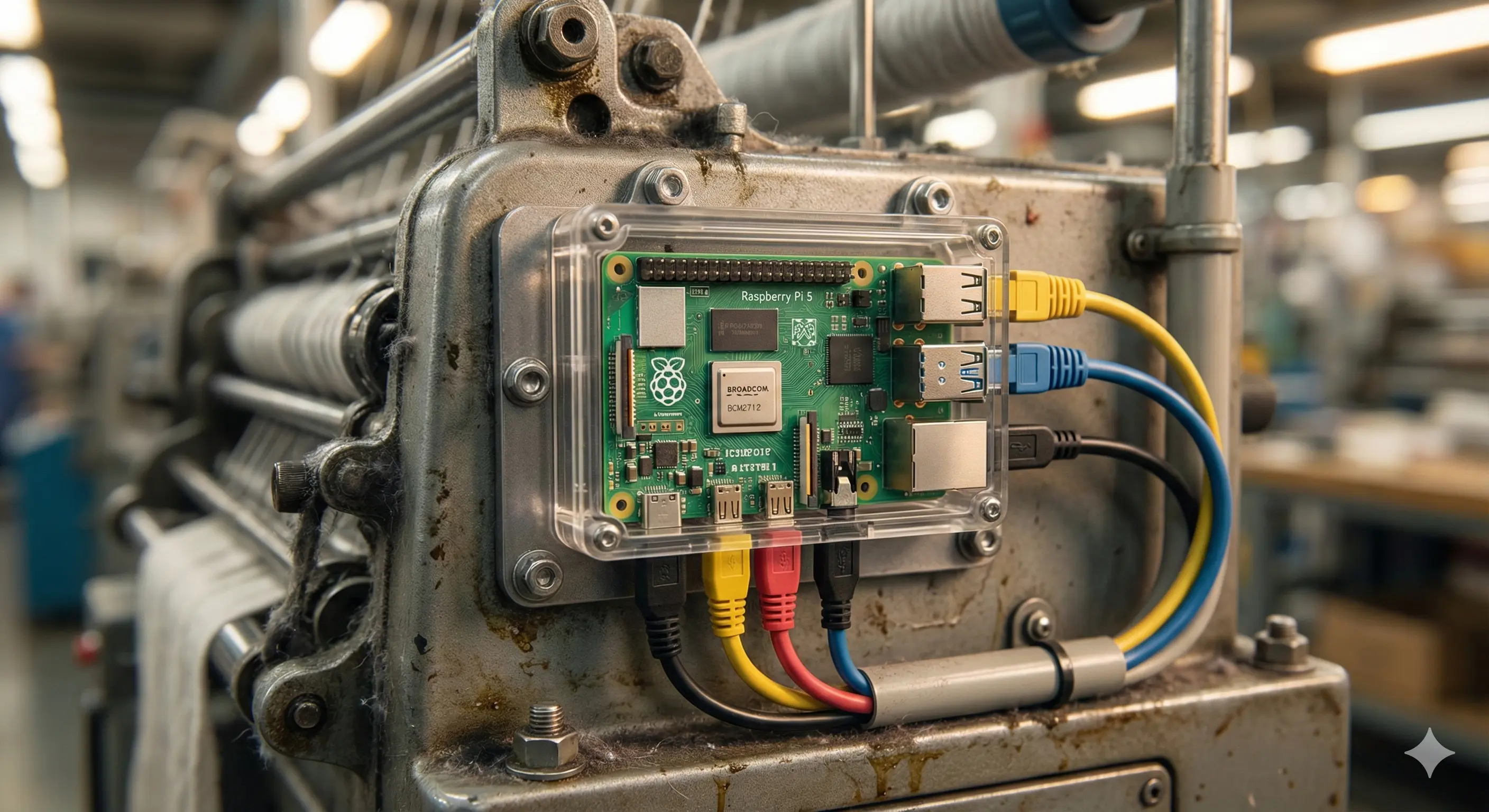

We deploy MicroViT-Tiny-Q8 on recycled smartphones ($20 BOM). Unlike human inspectors, the model does not blink or tire over a shift.

DINOv2 (Meta) is the gold standard for features but is too computationally heavy for a $90 computer. We use MicroViT-Tiny-Q8 instead. By quantizing weights to 8-bit integers, we fit the model into the L2 cache of mobile processors (Snapdragon 778G), achieving a 3.6x speedup over ViT-Small while maintaining 91.3% accuracy on fabric texture datasets.
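To make the 8-bit idea concrete, here is a minimal sketch of symmetric per-tensor quantization in plain Python. It is an illustration under stated assumptions, not the MicroViT-Tiny-Q8 pipeline: the function names and the sample weights are made up, and real toolchains typically quantize per channel and calibrate activations as well.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: floats -> int8 values plus one float scale."""
    max_abs = max(abs(w) for w in weights)
    # Map the largest magnitude onto 127; guard against an all-zero tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float values from the int8 codes."""
    return [v * scale for v in q]


# Hypothetical weights, chosen only for illustration.
weights = [0.82, -1.27, 0.05, 0.40, -0.63]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding error per weight is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))

# Each weight shrinks from 4 bytes (float32) to 1 byte (int8).
FLOAT32_BYTES, INT8_BYTES = 4, 1
size_ratio = FLOAT32_BYTES / INT8_BYTES

print(q, round(max_error, 6), size_ratio)
```

The sketch only shows the storage side: weights take a quarter of the space, at the cost of a bounded rounding error. How much of that translates into speed depends on the processor and the runtime.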

We replaced the heavy Segment Anything Model (SAM) with PicoSAM-2 (1.3M params). This allows "Promptable Masks": a floor manager can tap a new defect type on a tablet, and the model instantly learns the boundary. This few-shot learning adapts to new fabric styles in minutes, not weeks.

Fabric rolls move at high speed. A round trip to the cloud takes 3,000 ms. Our local inference takes 28 ms. This lets the machine stop before the defect is wound into the roll.
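The latency gap is easier to judge as fabric length. The sketch below converts a decision delay into the length of fabric that passes the camera in that time. The 60 m/min line speed is an assumption chosen for round numbers, not a measured figure, and the calculation covers inference latency only (not camera exposure or the machine's mechanical stopping time).

```python
def travel_mm(line_speed_m_per_min, latency_ms):
    """Fabric length in mm that passes the camera while a decision is pending."""
    # m/min -> mm/ms: multiply by 1000 mm per m, divide by 60,000 ms per min.
    speed_mm_per_ms = line_speed_m_per_min * 1000.0 / 60_000.0
    return speed_mm_per_ms * latency_ms


# Assumed line speed for illustration only.
LINE_SPEED_M_PER_MIN = 60.0

local = travel_mm(LINE_SPEED_M_PER_MIN, 28)    # on-device inference
cloud = travel_mm(LINE_SPEED_M_PER_MIN, 3000)  # cloud round trip

print(f"local: {local} mm, cloud: {cloud} mm")  # local: 28.0 mm, cloud: 3000.0 mm
```

At that assumed speed, a local decision arrives after about 28 mm of fabric, while a cloud decision arrives after 3 m has already gone by.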

Proprietary fabric designs and worker faces are processed in RAM and discarded. No images leave the factory unless explicitly flagged for retraining, in compliance with Bangladesh's Digital Security Act (DSA) 2018.
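The retention rule can be sketched as follows. This is a hypothetical illustration, not the shipped code: `Result`, `RetrainingQueue`, `handle_frame`, and the stand-in `fake_infer` are names invented here, and letting a frame go out of scope in Python releases it to the garbage collector rather than securely wiping it.

```python
from dataclasses import dataclass, field


@dataclass
class Result:
    defect: bool
    flagged_for_retraining: bool


@dataclass
class RetrainingQueue:
    # The only place a frame may outlive its inference call.
    frames: list = field(default_factory=list)


def handle_frame(frame: bytes, infer, queue: RetrainingQueue) -> bool:
    """Run inference in memory; keep the frame only if it was explicitly flagged."""
    result = infer(frame)
    if result.flagged_for_retraining:
        queue.frames.append(frame)
    # Otherwise no reference to the frame is kept after this function returns.
    return result.defect


def fake_infer(frame: bytes) -> Result:
    """Stand-in model: treats frames starting with b'!' as flagged defects."""
    is_defect = frame.startswith(b"!")
    return Result(defect=is_defect, flagged_for_retraining=is_defect)


queue = RetrainingQueue()
decisions = [handle_frame(f, fake_infer, queue) for f in (b"plain weave", b"!hole")]
print(decisions, len(queue.frames))
```

Only the flagged frame ends up in the queue; the other is never written anywhere.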